One of the good things about new platforms is that you get to learn new technology. One of the bad things about new technology is that a lot of your old methods might not apply anymore, and need to be revamped or redesigned completely. Over the last few months I’ve been working on a Cisco UCS platform deployment for work. This has been quite exciting as it’s all new gear, and it’s implementing stuff that we should have been able to implement with our HP BladeSystem C7000 gear.

One of the biggest gotchas so far is that the build image we have for our machines no longer works. The Cisco UCS B200 servers have hardware that isn’t detected out of the box with Windows 2012R2. This means we have to inject drivers into the boot image, and the install image, to make the install work when using Boot From SAN (BFS).

This post is a reference for myself, and for others who might find it handy, because I’m constantly forgetting how to update the drivers in boot images. One thing I’m very thankful for is the WIM image format that Microsoft started using with Windows Vista. It makes image management so much easier.

Preparing your build environment

The first step is to install the Microsoft ADK (Assessment and Deployment Kit). When you run the installer, you only need to select the 2 deployment and build packages.

The next step is to prepare the build environment. I have a secondary drive in my desktop, so I built the following structure:

F:\
|-Build
   |-ISO
   |-Windows
   |-Drivers
      |-Network
      |-Storage
      |-Chipset
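If you rebuild this layout often, it can be scripted. The following is a minimal sketch in Python, assuming the folder names shown above; the function name create_build_tree is my own and not part of any tool.

```python
from pathlib import Path

# Sub-folders from the tree above, relative to the build root (F:\Build in this post).
BUILD_SUBDIRS = [
    "ISO",
    "Windows",
    "Drivers/Network",
    "Drivers/Storage",
    "Drivers/Chipset",
]


def create_build_tree(root):
    """Create the build folders under `root` and return the paths that now exist."""
    root = Path(root)
    created = []
    for sub in BUILD_SUBDIRS:
        path = root / sub
        # parents=True also creates Drivers; exist_ok=True makes reruns harmless.
        path.mkdir(parents=True, exist_ok=True)
        created.append(path)
    return created
```

On the build machine you would call create_build_tree(r"F:\Build"), swapping in whatever drive you are using.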

You’ll need a valid copy of Windows 2012R2. If you have it on DVD, simply copy the contents of the DVD into your Windows folder as I have documented above. You’ll also need a copy of the driver CD from the vendor. As this is for the Cisco UCS B200, the drivers come from Cisco, and you need a login to download them.

Find the drivers you need on the DVD. In my case the Network and Storage drivers were easy, as there was only one in each of the named folders. The chipset was more difficult: a clean install of Windows didn’t detect the chipset, and pointing Windows at the driver DVD installed all the drivers, but the device then reported that no special drivers were needed and refused to list any. After some fudging around, I managed to identify these as Intel’s Ivytown drivers, so I dropped those in the Chipset folder.

Identifying Install Image and Injecting Drivers

This is where all the magic happens. We’re going to inject the drivers into the image, or slipstream them as it’s called in some places. This is done with just a handful of commands. The first thing we need to do is identify which install image we want to work with. A standard Volume License Windows 2012R2 DVD has 4 install images: Standard core, Standard with GUI, Datacenter core, and Datacenter with GUI. As we usually build GUI-based boxes, we’re only interested in editing those images for now. From an elevated PowerShell prompt, we list the contents of the install image:

F:\Build>dism /Get-ImageInfo /ImageFile:.\Windows\Sources\install.wim

Deployment Image Servicing and Management tool

Version: 6.3.9600.16384

Details for image : .\Windows\Sources\install.wim

Index : 1

Name : Windows Server 2012 R2 SERVERSTANDARDCORE

Description : Windows Server 2012 R2 SERVERSTANDARDCORE

Size : 6,674,506,847 bytes

Index : 2

Name : Windows Server 2012 R2 SERVERSTANDARD

Description : Windows Server 2012 R2 SERVERSTANDARD

Size : 11,831,211,505 bytes

Index : 3

Name : Windows Server 2012 R2 SERVERDATACENTERCORE

Description : Windows Server 2012 R2 SERVERDATACENTERCORE

Size : 6,673,026,597 bytes

Index : 4

Name : Windows Server 2012 R2 SERVERDATACENTER

Description : Windows Server 2012 R2 SERVERDATACENTER

Size : 11,820,847,585 bytes

The operation completed successfully.

As you can see, we have 4 images here. Two are core (1 and 3), so we’ll ignore those and just work on the images we need (2 and 4).
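Picking the indexes by eye is fine for 4 images, but the same selection can be sketched in Python. This is only an illustration that assumes the text layout shown above (Index and Name lines) and that core editions have names ending in CORE; parse_image_info and gui_indexes are hypothetical helper names, and SAMPLE is a trimmed copy of the output above.

```python
import re

# Trimmed copy of the `dism /Get-ImageInfo` output shown above.
SAMPLE = """
Index : 1
Name : Windows Server 2012 R2 SERVERSTANDARDCORE
Description : Windows Server 2012 R2 SERVERSTANDARDCORE
Size : 6,674,506,847 bytes

Index : 2
Name : Windows Server 2012 R2 SERVERSTANDARD

Index : 3
Name : Windows Server 2012 R2 SERVERDATACENTERCORE

Index : 4
Name : Windows Server 2012 R2 SERVERDATACENTER
"""


def parse_image_info(text):
    """Return {index: name} from the Index/Name lines of the DISM listing."""
    images = {}
    current = None
    for line in text.splitlines():
        match = re.match(r"\s*(Index|Name)\s*:\s*(.+?)\s*$", line)
        if not match:
            continue  # Description, Size, banner and blank lines are ignored
        key, value = match.groups()
        if key == "Index":
            current = int(value)
        elif current is not None:
            images[current] = value
    return images


def gui_indexes(images):
    """Indexes whose edition name does not end in CORE (assumed to be the GUI builds)."""
    return [i for i, name in sorted(images.items()) if not name.upper().endswith("CORE")]


if __name__ == "__main__":
    print(gui_indexes(parse_image_info(SAMPLE)))  # prints [2, 4]
```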

F:\Build>dism /Mount-Image /ImageFile:.\Windows\Sources\install.wim /MountDir:.\ISO /Index:2

Deployment Image Servicing and Management tool

Version: 6.3.9600.16384

Mounting Image

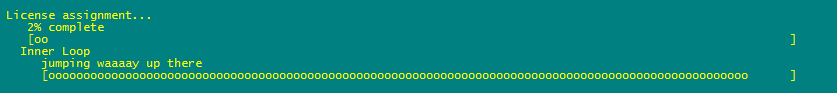

[==================52.0% ]

This bit can take a few minutes. Once mounted, if you open Windows Explorer at F:\Build\ISO you’ll see the full drive layout of an installed Windows machine. This is where we’re going to inject drivers.

F:\Build>dism /Image:.\ISO /Add-Driver /Driver:.\Drivers /Recurse

After a few minutes, you’ll get a nice report of the drivers being added. If you have any unsigned drivers, you can add /ForceUnsigned to the end to make it skip signature validation.

Now we save the updated image, and close it out.

F:\Build>dism /Unmount-Image /MountDir:.\ISO /commit

The /commit forces it to save the changes. If you don’t want to save, use /discard.

We then repeated the same steps, changing /Index:2 to /Index:4 to mount the Datacenter edition of Windows.
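Because the steps are identical for each index, the command sequence is easy to generate. Here is a rough sketch in Python that only builds the command lines and does not run them; driver_injection_commands is a name I made up, and it uses the documented /Add-Driver /Driver:<path> /Recurse form of the DISM driver option.

```python
def driver_injection_commands(wim, mount_dir, driver_dir, indexes, force_unsigned=False):
    """Build one mount / add-driver / unmount sequence per image index.

    Returns a list of argument lists. Nothing is executed here; each entry
    could be passed to subprocess.run() from an elevated prompt.
    """
    commands = []
    for index in indexes:
        add_driver = ["dism", f"/Image:{mount_dir}", "/Add-Driver",
                      f"/Driver:{driver_dir}", "/Recurse"]
        if force_unsigned:
            # Skips signature validation for unsigned drivers.
            add_driver.append("/ForceUnsigned")
        commands.append(["dism", "/Mount-Image", f"/ImageFile:{wim}",
                         f"/MountDir:{mount_dir}", f"/Index:{index}"])
        commands.append(add_driver)
        # /Commit saves the changes; swap in /Discard to throw them away.
        commands.append(["dism", "/Unmount-Image", f"/MountDir:{mount_dir}", "/Commit"])
    return commands


if __name__ == "__main__":
    for cmd in driver_injection_commands(r".\Windows\Sources\install.wim",
                                         r".\ISO", r".\Drivers", [2, 4]):
        print(" ".join(cmd))
```

Printing the commands first and running them by hand is a reasonable way to sanity-check the sequence before letting a script touch the image.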

Adding Features to the Install Image

Another thing we needed to do, to save a step later, was enable features in the new build. Again, dism can handle this by enabling the feature in the mounted image. We wanted MPIO enabled because the B200 has 2 paths due to the chassis they are connected in.

F:\Build>dism /Mount-Image /ImageFile:.\Windows\Sources\install.wim /MountDir:.\ISO /Index:2

F:\Build>dism /Image:.\ISO /Enable-Feature /FeatureName:MultipathIo

F:\Build>dism /Unmount-Image /MountDir:.\ISO /commit

Technically you can save some time by enabling the features at the same time you are injecting the drivers. I’ve just got them separated here.
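As a sketch of that combined approach, the following Python builds the commands for a single mount that adds drivers and enables features before committing. It only generates command lines; single_session_commands is a hypothetical helper name, and the feature name is the one used above.

```python
def single_session_commands(wim, mount_dir, index, driver_dir=None, features=()):
    """Build the commands for one mount that adds drivers and enables features.

    Mounting and committing are the slow parts, so both servicing steps
    share a single mount/unmount pair.
    """
    commands = [["dism", "/Mount-Image", f"/ImageFile:{wim}",
                 f"/MountDir:{mount_dir}", f"/Index:{index}"]]
    if driver_dir:
        commands.append(["dism", f"/Image:{mount_dir}", "/Add-Driver",
                         f"/Driver:{driver_dir}", "/Recurse"])
    for feature in features:
        commands.append(["dism", f"/Image:{mount_dir}", "/Enable-Feature",
                         f"/FeatureName:{feature}"])
    commands.append(["dism", "/Unmount-Image", f"/MountDir:{mount_dir}", "/Commit"])
    return commands


if __name__ == "__main__":
    for cmd in single_session_commands(r".\Windows\Sources\install.wim", r".\ISO", 2,
                                       driver_dir=r".\Drivers",
                                       features=["MultipathIo"]):
        print(" ".join(cmd))
```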

Adding drivers to Setup and WinPE Images

Adding the drivers to the install image isn’t the end of it. If you’re working with hardware that’s not supported out of the box (like the Cisco VICs), you also need to add the drivers to the Setup and WinPE images, so that both can see drives presented from another source (a SAN, for example). The steps are identical to those above, except for the image we’re targeting:

F:\Build>dism /Get-ImageInfo /ImageFile:.\Windows\Sources\boot.wim

Deployment Image Servicing and Management tool

Version: 6.3.9600.16384

Details for image : .\Windows\Sources\boot.wim

Index : 1

Name : Microsoft Windows PE (x64)

Description : Microsoft Windows PE (x64)

Size : 1,321,549,982 bytes

Index : 2

Name : Microsoft Windows Setup (x64)

Description : Microsoft Windows Setup (x64)

Size : 1,417,514,940 bytes

The operation completed successfully.

F:\Build>dism /Mount-Image /ImageFile:.\Windows\Sources\boot.wim /MountDir:.\ISO /Index:1

F:\Build>dism /Image:.\ISO /Add-Driver /Driver:.\Drivers /Recurse

F:\Build>dism /Unmount-Image /MountDir:.\ISO /commit

F:\Build>dism /Mount-Image /ImageFile:.\Windows\Sources\boot.wim /MountDir:.\ISO /Index:2

F:\Build>dism /Image:.\ISO /Add-Driver /Driver:.\Drivers /Recurse

F:\Build>dism /Unmount-Image /MountDir:.\ISO /commit

Now our setup image can see the devices that may be required for disk access.

Building the ISO Image

The final step is to turn all this hard work into a usable ISO/DVD. This is where the ADK comes into play. You’ll need to launch the “Deployment and Imaging Tools Environment” prompt with elevated access. This extends the %PATH% variable to include some additional tools. We then navigate back to our build directory and start the build process.

C:\>F:

F:\>cd Build

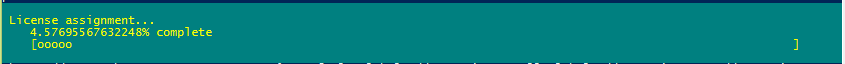

F:\Build>oscdimg -u2 -bf:\build\windows\boot\etfsboot.com f:\build\windows f:\build\win2012r2_b200_20150312.iso

This takes a few minutes as it’s making a new ISO. The -u2 argument forces the UDF file system. Without it, install.wim and some other items get trashed by file size limitations.
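The ISO name above carries a date stamp, so a small helper can build the oscdimg command for each new build. This is a sketch in Python under the assumption that the name follows a label_YYYYMMDD.iso pattern like the one above; oscdimg_command is my own name, and it only assembles the arguments without running anything.

```python
from datetime import date
from pathlib import PureWindowsPath


def oscdimg_command(source_dir, output_dir, label, build_date):
    """Build the oscdimg argument list for a date-stamped, UDF-only bootable ISO."""
    source = PureWindowsPath(source_dir)
    boot_sector = source / "boot" / "etfsboot.com"
    iso = PureWindowsPath(output_dir) / f"{label}_{build_date:%Y%m%d}.iso"
    # -u2 forces UDF; -b takes the boot sector path with no space after the switch.
    return ["oscdimg", "-u2", f"-b{boot_sector}", str(source), str(iso)]


if __name__ == "__main__":
    print(" ".join(oscdimg_command(r"f:\build\windows", r"f:\build",
                                   "win2012r2_b200", date.today())))
```

PureWindowsPath is used so the paths are joined with Windows separators no matter where the script is run.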

Once you have an ISO file, you can either use your favourite ISO burning utility to put it on DVD, or use your server’s KVM/ILO/DRAC to remotely mount it and do the install.

All in all, the process takes about 30 minutes, depending on the speed of your machine and disks, and on the drivers and features being added. Sadly it took me nearly 2 days to actually build the final image because I had issues identifying and including the right chipset drivers.
